Search Engine Basics: How Crawling, Indexing, and Ranking Work

Why Marketers Need to Understand Search Engine Basics

You do not need to be an engineer to make smart SEO decisions, but you do need to understand how search engines work. Search engine basics are the foundation of every content and SEO decision your team makes, from choosing topics to structuring pages to building internal links. When you understand what happens between the moment someone hits "publish" and the moment a page appears in search results, you stop guessing and start making decisions grounded in how the system actually operates.

This guide covers the three core stages every search engine runs through: crawling, indexing, and ranking. By the end, you will have a clear mental model you can use to evaluate your content strategy, troubleshoot visibility problems, and prioritize the right work.

Search Engine Basics: How Crawling Works

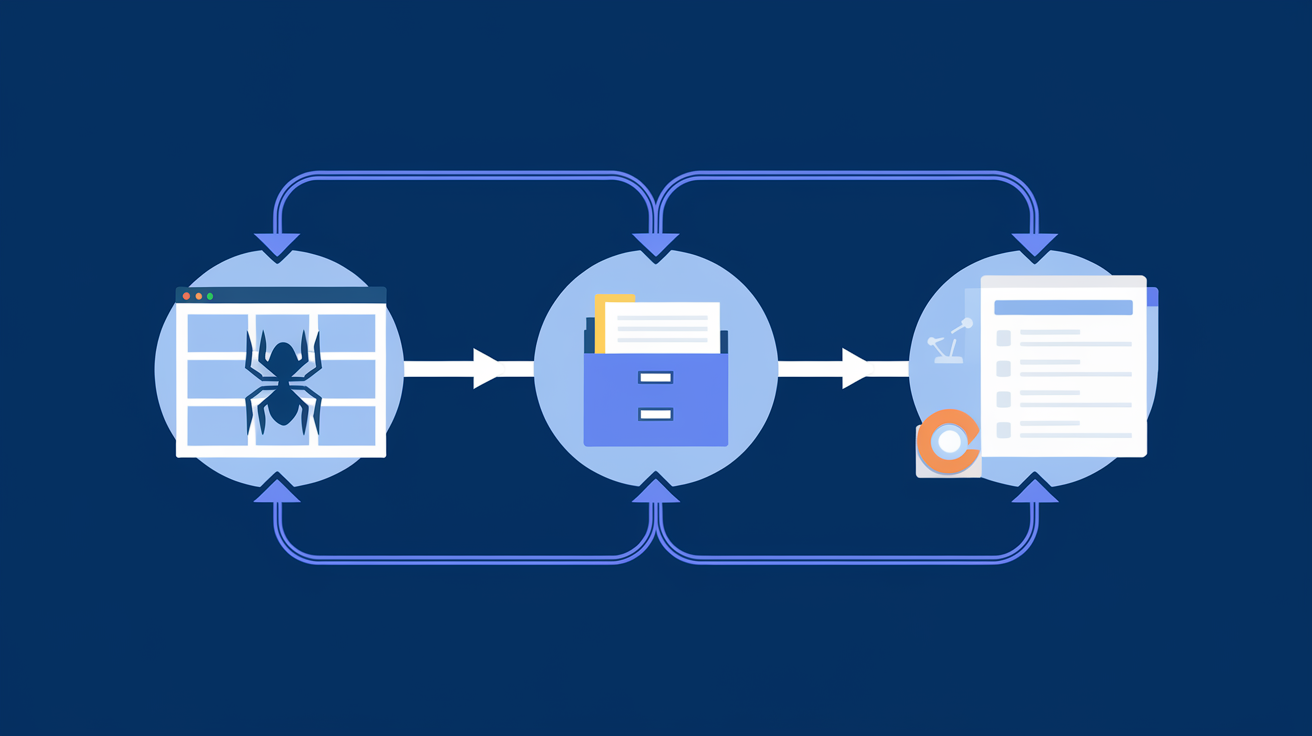

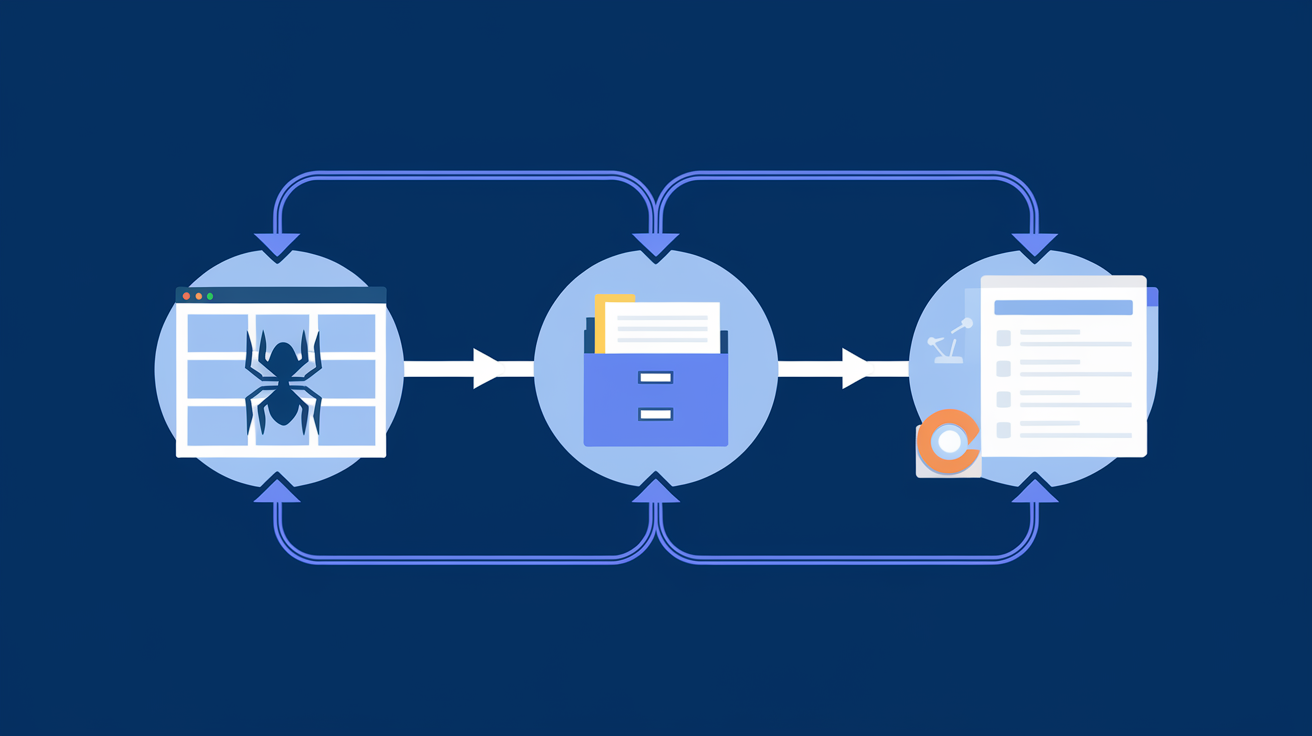

Crawling is how a search engine discovers content on the web. Google sends out automated programs called crawlers (the most well-known is Googlebot) that follow links from page to page across the internet. Every time a crawler visits a URL, it reads the page's content and follows any outbound links it finds, which leads it to more pages.
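
To make the link-following idea concrete, here is a minimal sketch of breadth-first discovery over a tiny in-memory site. It is an illustration only, not how Googlebot is implemented: the `SITE` link graph, its URLs, and the `crawl` function are all hypothetical, and no network requests are made.

```python
from collections import deque

def crawl(link_graph, seed):
    """Return URLs in the order a breadth-first crawler would discover them."""
    discovered = [seed]
    seen = {seed}
    queue = deque([seed])
    while queue:
        url = queue.popleft()
        # "Read" the page and follow every link found on it.
        for target in link_graph.get(url, []):
            if target not in seen:
                seen.add(target)
                discovered.append(target)
                queue.append(target)
    return discovered

# Hypothetical site: each key is a page, each value is the links on that page.
SITE = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/crawling", "/"],
    "/blog/crawling": ["/blog"],
    "/orphan": ["/"],  # links out, but nothing links to it
}

order = crawl(SITE, "/")
print(order)  # ['/', '/blog', '/pricing', '/blog/crawling']
```

Notice that `/orphan` never shows up: a page that nothing links to cannot be reached by following links, so it would need another discovery route such as a sitemap.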

Crawl budget is a concept every content team should know. Google does not crawl every page on your site every day. It allocates a finite amount of crawl activity to each domain based on signals like site authority, server speed, and how often content changes. If your site has thousands of low-quality or duplicate pages, Googlebot may spend its budget on those instead of your best content.

Three things help you guide crawlers to the right pages. First, a well-structured XML sitemap tells Google which URLs you want crawled and, through its lastmod values, when each one last changed. Second, your internal linking structure signals which pages are important by showing how often they are linked to from other pages on your site. Third, the robots.txt file tells crawlers which areas of your site to skip entirely. Keep in mind that robots.txt controls crawling rather than indexing: a blocked URL can still appear in results if other pages link to it.
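
If you want to check what a robots.txt file actually blocks, Python's standard library includes a parser for it. The sketch below assumes a made-up robots.txt and example.com URLs; `is_crawlable` is a hypothetical helper name. One caveat: Python's parser applies the first matching rule, while Google uses the most specific matching rule, so results can differ when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def is_crawlable(url, user_agent="Googlebot"):
    """Return True if the parsed rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)

print(is_crawlable("https://example.com/blog/post"))       # True
print(is_crawlable("https://example.com/admin/settings"))  # False
```

Running a check like this against your most important URLs is a quick way to confirm that none of them are blocked by accident.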

You can learn more about how Google's crawlers work directly from Google Search Central's crawling and indexing documentation, which explains the different crawler types and how they decide what to fetch. The technical SEO for content teams guide covers these crawl management concepts in more depth. For content teams, the practical takeaway is straightforward: make your important pages easy to find by linking to them consistently and keeping your sitemap current.

How Indexing Works

Once a crawler fetches a page, the search engine processes it and decides whether to add it to its index. The index is essentially a massive database of web pages that the search engine can retrieve when someone performs a query. Being indexed is a prerequisite for ranking. If a page is not in the index, it does not exist as far as search results are concerned.

Not every page that gets crawled ends up indexed. Google may choose to exclude a page for several reasons. Thin or duplicate content is one of the most common. If a page closely resembles another page on your site or across the web, Google may index only one version or none at all. Crawl errors, slow load times, and pages blocked by a noindex tag are other frequent causes.
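
A stray noindex tag is one of the easier causes to check for yourself. The following is a rough sketch, assuming you already have the page's HTML as a string; `RobotsMetaParser` and `has_noindex` are hypothetical names. It only inspects robots meta tags in the HTML, so it will not catch a noindex sent through the X-Robots-Tag HTTP header.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> or <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            # The content attribute is a comma-separated list of directives.
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())

def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives

blocked = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
open_page = '<html><head><meta name="description" content="A guide"></head></html>'

print(has_noindex(blocked))    # True
print(has_noindex(open_page))  # False
```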

The indexing process also involves rendering. Modern websites rely heavily on JavaScript to display content, and Google has to render the page (essentially run the JavaScript) to see what a user would actually see. If critical content is loaded dynamically and rendering fails or is delayed, that content may not get indexed properly.

Content teams run into indexing problems more often than they realize. A page might get published, shared, and even linked to, but still not appear in search results because of a technical issue. Checking Google Search Console's Page indexing report (formerly called the Coverage report) regularly tells you which pages are indexed, which are excluded, and why. Understanding the difference between crawling and indexing is the key insight here: crawling is discovery, indexing is acceptance.

How Google Ranks Pages

Ranking is where things get complicated, and where most of the SEO conversation lives. Once a page is indexed, Google evaluates it against hundreds of signals to determine where it should appear for any given query. Google's own explanation of how search works describes the goal simply: return the most relevant, reliable result for what the user is looking for.

The two broadest categories of ranking signals are relevance and authority. Relevance is about how well a page matches what someone is searching for. Authority is about how trustworthy and credible the source is, often measured by the quality and quantity of links pointing to it from other sites.

Relevance signals include the words on the page, how they are organized, whether they match the search intent behind the query, and how comprehensively the page covers the topic. A page about "email marketing tips" that only covers one tip superficially will rank below a page that addresses the topic thoroughly and in a way that matches what searchers actually want to know.

Authority signals are more external. When other reputable sites link to your page, they are effectively vouching for it. Google interprets these backlinks as votes of confidence. Not all links are equal: a link from a high-authority publication in your industry carries more weight than a link from a low-traffic directory site. Building authority takes time, which is why newer sites often struggle to rank even when their content is excellent.

A third category worth naming is user experience signals. Page speed, mobile-friendliness, and how users interact with a page after clicking through from search results all feed into how Google evaluates quality. If users consistently land on a page and immediately return to search results (a behavior sometimes called "pogo-sticking"), that is a signal that the page did not satisfy the query. For a deeper look at the full landscape of ranking factors, Moz's Beginner's Guide to SEO provides a well-organized overview.

What This Means for Content Teams

Understanding the three-stage pipeline changes how you approach content work. Most teams spend the majority of their time on creation and very little time on the structural and strategic decisions that actually determine whether content ranks. Here is what the crawling, indexing, and ranking model implies for your workflow.

Start with keyword and topic clarity before you write. If you do not know what query you are trying to rank for, you cannot write a page that matches it. A keyword mapping guide helps you assign specific keywords to specific pages so that no two pages compete with each other and every page has a clear job to do. This maps directly onto Google's relevance signals.
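
A keyword map can be as simple as a table of pages and their target keywords. As a small sketch, assuming one primary keyword per page, the hypothetical `find_keyword_conflicts` function below flags any keyword assigned to more than one page. The URLs and keywords are invented for illustration.

```python
from collections import defaultdict

def find_keyword_conflicts(keyword_map):
    """Return keywords that are targeted by more than one page."""
    pages_by_keyword = defaultdict(list)
    for page, keyword in keyword_map.items():
        # Normalize so "Email Marketing Tips" and "email marketing tips" match.
        pages_by_keyword[keyword.strip().lower()].append(page)
    return {kw: pages for kw, pages in pages_by_keyword.items() if len(pages) > 1}

# Hypothetical keyword map: page URL -> primary target keyword.
KEYWORD_MAP = {
    "/blog/email-tips": "email marketing tips",
    "/blog/email-best-practices": "Email Marketing Tips",
    "/blog/subject-lines": "email subject lines",
}

conflicts = find_keyword_conflicts(KEYWORD_MAP)
print(conflicts)
# {'email marketing tips': ['/blog/email-tips', '/blog/email-best-practices']}
```

Each conflict is a prompt to merge the pages or retarget one of them.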

Build your content around topical depth, not just individual articles. Google rewards sites that cover subjects comprehensively. A single blog post rarely outranks a site that has built a full cluster of related content around a topic. This is the concept of topical authority: the more completely your site covers a subject, the more likely Google is to trust it as a reliable source across related queries.

Internal linking matters more than most content teams assume. Every time you link from one page to another on your site, you are doing two things: helping crawlers find the linked page and passing a signal that the linked page is worth visiting. A well-planned internal linking strategy ensures your most important pages collect the most internal link equity, which supports both crawl efficiency and ranking authority.
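
One way to audit this is to count how many other pages link to each page. The sketch below assumes you have already exported your internal links into a simple mapping; the `LINKS` data and the `inbound_link_counts` function are hypothetical, and the count is a rough proxy for internal link equity, not a measure Google publishes.

```python
from collections import Counter

def inbound_link_counts(link_graph):
    """Count how many distinct other pages link to each page."""
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        # Count each linking page once and ignore self-links.
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts

# Hypothetical export: each key is a page, each value is the pages it links to.
LINKS = {
    "/": ["/guide", "/blog"],
    "/blog": ["/guide", "/"],
    "/guide": ["/"],
    "/old-post": ["/guide"],
}

counts = inbound_link_counts(LINKS)
orphans = sorted(page for page, n in counts.items() if n == 0)

print(counts["/guide"])  # 3
print(orphans)           # ['/old-post']
```

If a page you consider important sits near the bottom of this list, that is a sign it needs more internal links pointing to it.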

Finally, publishing is not the finish line. Once a page goes live, it needs to be indexed, it needs to earn authority over time, and it may need to be updated as search intent or competition shifts. Content maintenance is part of the SEO workflow, not an afterthought, and teams that build review cycles into their content calendar are better positioned than teams that treat publishing as the end of the process. The SEO fundamentals for marketers guide covers how to build these review cycles into a sustainable practice.

If you are not sure how these pieces fit together into a coherent strategy, What Is an SEO Content Strategy? walks through how to build a plan that accounts for crawlability, topical coverage, and ranking goals from the start.

Putting It All Together

Search engines follow a logical process: they discover pages by crawling, evaluate and store them through indexing, and sort them for users through ranking. Each stage has its own rules, and each stage is a place where content can succeed or fail. Knowing where in the pipeline a problem lives tells you exactly what to fix, whether that is a sitemap issue, a thin-content problem, or a gap in your authority-building efforts. For a broader overview of how these concepts connect, the organic searches explained guide covers the full journey from query to click.

For content teams, this knowledge is not academic. It is the difference between publishing and hoping versus publishing with a clear understanding of what needs to happen next. When you align your content decisions with how search engines actually operate, you give every piece you create a real path to visibility.

If you want to see how ClusterMagic can help you build content clusters that align with the way Google crawls, indexes, and ranks content, book a walkthrough and we will show you how it works.
